The model, a model...

I completely forgot to say a few words about models in the previous post, so here is a separate one.

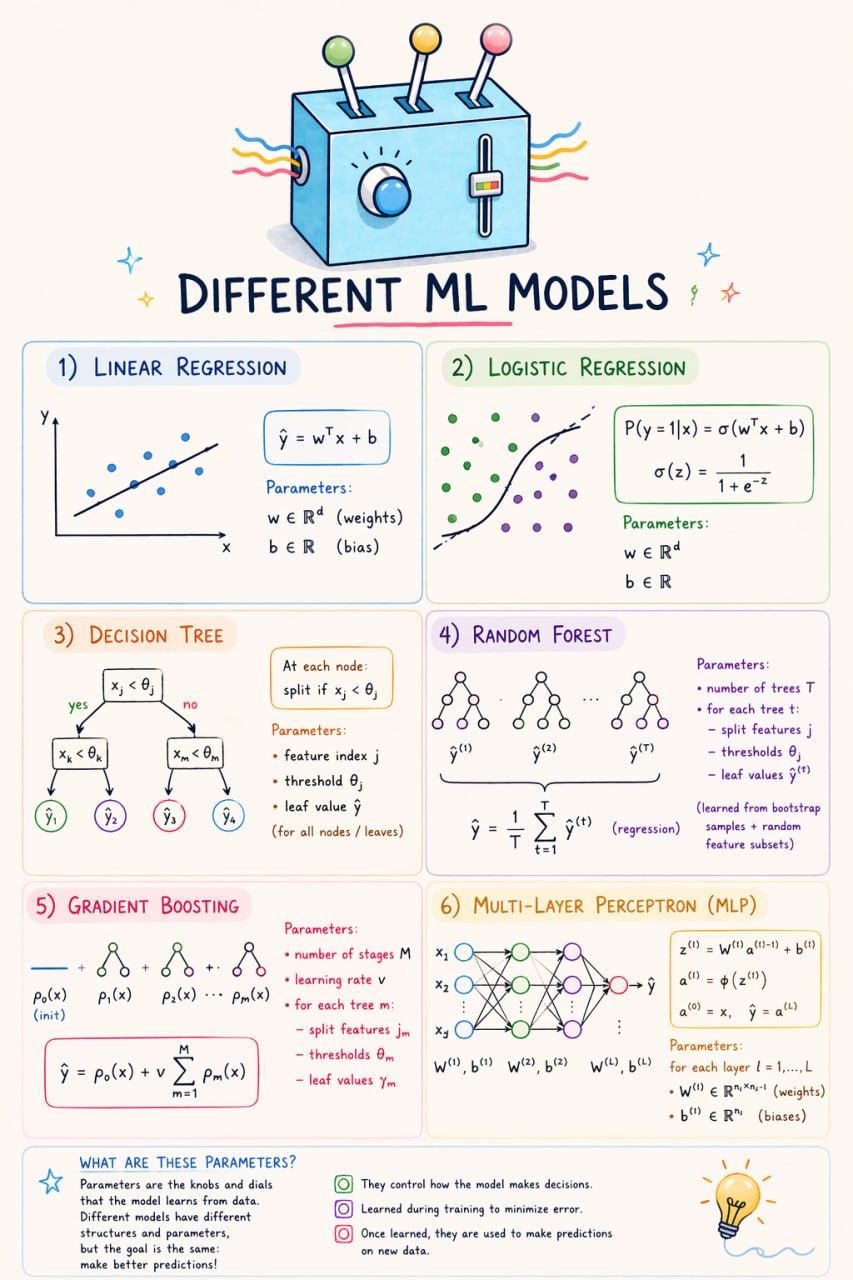

The picture is quite traditional. Formally speaking, a model is a function f = f(x, α). In our terms, x is the vector of feature values for an object, and α is the set of parameters: all the knobs and handles we can adjust to fit the dataset.

Of course, you know the linear model y = kx + c. It has parameters k and c. You have probably also heard about decision trees. There, the parameters are thresholds in the nodes and values in the leaves. In neural networks, parameters are the numbers in the weight matrices.
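To make the f(x, α) notation concrete, here is a minimal sketch of two of the models above written as plain functions of an input and a parameter tuple. The function names and the one-node "stump" tree are my own illustration, not a reference implementation.

```python
def linear_model(x, alpha):
    """f(x, alpha) for the linear model y = k*x + c, with alpha = (k, c)."""
    k, c = alpha
    return k * x + c


def stump_model(x, alpha):
    """A decision tree with a single node.

    alpha = (threshold, left_value, right_value): one threshold in the node
    and one value in each of the two leaves.
    """
    threshold, left_value, right_value = alpha
    return left_value if x <= threshold else right_value


# Same input, different models, different parameter sets.
print(linear_model(2.0, (3.0, 1.0)))       # 3.0 * 2.0 + 1.0 = 7.0
print(stump_model(2.0, (5.0, -1.0, 1.0)))  # 2.0 <= 5.0, so the left leaf: -1.0
```

Fitting a model then means searching for the alpha that makes these outputs match the dataset best.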

All of these, however complex, are by now familiar. What was a fresh idea for me personally is that each feature is itself a model: it already maps an object to a number. So you can estimate its quality individually using ROC AUC, MSE, or anything else you like. This immediately gives a rough idea of how important a feature may be, without training complex models or running SHAP analysis.
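A small sketch of scoring features one by one, assuming a binary target. The data below is made up purely for illustration, and `roc_auc` is a hand-written pairwise version of the metric (in practice you would more likely call a library function).

```python
def roc_auc(scores, labels):
    """ROC AUC as the share of (positive, negative) pairs ranked correctly.

    A tie between a positive and a negative counts as half a correct pair.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Made-up toy data: six objects, two hypothetical features, one binary label.
labels = [0, 0, 1, 1, 0, 1]
features = {
    "feature_a": [0.1, 0.4, 0.35, 0.8, 0.2, 0.9],
    "feature_b": [5, 1, 3, 2, 4, 6],
}

# Treat every raw feature as a ready-made model and score it directly.
feature_auc = {name: roc_auc(values, labels) for name, values in features.items()}
for name, auc in feature_auc.items():
    print(f"{name}: {auc:.3f}")
```

On this toy data `feature_a` scores about 0.889 and `feature_b` about 0.556. A value far from 0.5 in either direction suggests the feature carries signal; a value well below 0.5 just means the relationship is inverted.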

From this point of view, feature engineering like `family = sibsp + parch + 1` can be considered a kind of stacking of models.
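Here is one way to read that formula as stacking, sketched under my own naming: two base "models" that each return a single column, and a linear model on top whose parameters are set by hand rather than fitted. The passenger row is made up.

```python
def linear_model(x, alpha):
    """Weighted sum of the inputs plus a bias; alpha = (weights, bias)."""
    weights, bias = alpha
    return sum(w * v for w, v in zip(weights, x)) + bias


# Base level: each raw feature is a tiny model that returns one column.
def sibsp_model(row):
    return row["sibsp"]


def parch_model(row):
    return row["parch"]


# Top level: a linear model over the base outputs with hand-picked parameters.
# Weights [1, 1] and bias 1 reproduce family = sibsp + parch + 1.
FAMILY_ALPHA = ([1, 1], 1)


def family_model(row):
    base_outputs = [sibsp_model(row), parch_model(row)]
    return linear_model(base_outputs, FAMILY_ALPHA)


row = {"sibsp": 1, "parch": 2}  # made-up passenger
print(family_model(row))  # 1 + 2 + 1 = 4
```

The difference from ordinary stacking is only where the top-level parameters come from: here a human chose them from domain knowledge, whereas a stacked meta-model would learn them from data.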

And finally: `[0.23, -1.18, 3.25]` looks like a normal set of model parameters. But what if I tell you that `((1 x) ((1 1) x))` is a model description as well? Let’s discuss it next time.