A short update on the GBDTE presentation.

The die is cast. I set the date for my presentation on Gradient Boosted Decision Trees. It's Jan 15. I'm aiming for a 45-minute presentation, so about 30 slides to make. The timeline isn't actually tight, but there's no room to sit idle either.

So I decided to stop where I am now and work on the presentation. The current state is:

🌳there is an efficient multithreaded Golang implementation with a Python bridge

🌳there is a synthetic MSE dataset

🌳there is a synthetic LogLoss dataset

The datasets demonstrate that the model works and can handle hundreds of thousands of records.

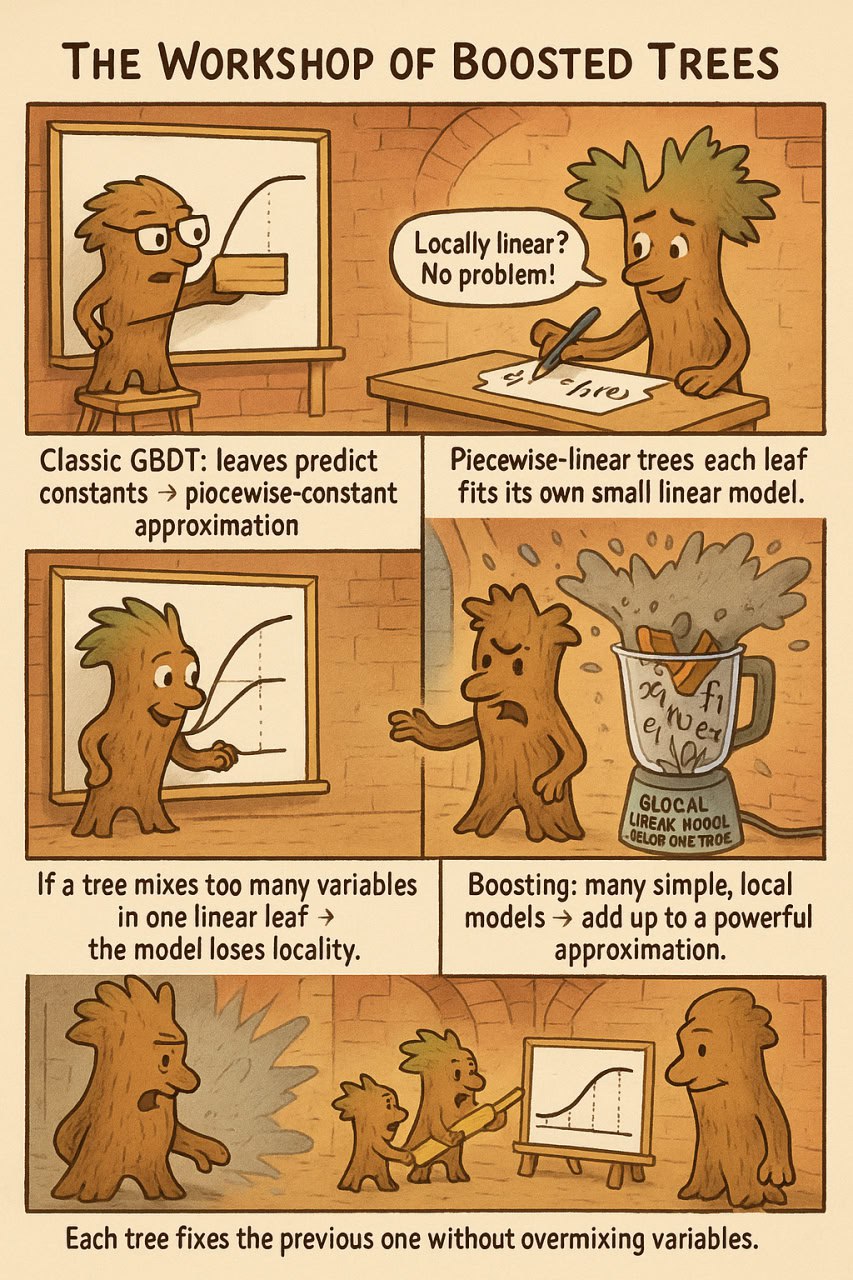

The crazy picture is about my predecessors:

🌳2017 LinXGBoost: Extension of XGBoost to Generalized Local Linear Models (arXiv)

🌳2019 Gradient Boosting with Piece-Wise Linear Regression Trees (GBDT-PL), IJCAI (arXiv)

🌳2023 Fast Linear Model Trees by PILOT (arXiv)

🌳2024 PINE / PINEBoost: Efficient Piecewise-Linear Trees for Gradient Boosting (arXiv)

I started thinking about them because of one quite interesting thing. There are papers in which the authors try to mix extrapolating and interpolating features. My point is that this is not a good idea, because it breaks the fundamental idea behind the method: group objects with one type of feature and capture trends with another.

An accidental experiment of mine showed that performance drops when you do it. And I think I know how to explain why.
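
To make the "group with one type of feature, capture trends with another" idea concrete, here is a minimal Python sketch. It is a toy under stated assumptions, not the Go implementation: the names `make_data`, `fit_group_then_trend` and `predict` are made up for illustration, a single split on a binary `group` feature stands in for a tree, and a per-leaf straight line in `t` (fitted with `np.polyfit`) stands in for the leaf model.

```python
import numpy as np


def make_data(n, t_low, t_high, rng):
    """Toy data: `group` decides which trend applies, `t` drives the trend."""
    group = rng.integers(0, 2, size=n)       # interpolating feature (assumed binary)
    t = rng.uniform(t_low, t_high, size=n)   # extrapolating feature
    slope = np.where(group == 0, 1.0, -2.0)  # each group has its own trend
    y = slope * t + rng.normal(0.0, 0.1, size=n)
    return group, t, y


def fit_group_then_trend(group, t, y):
    """Split on `group` only, then fit y ~ a * t + b inside each leaf."""
    leaves = {}
    for g in np.unique(group):
        mask = group == g
        a, b = np.polyfit(t[mask], y[mask], deg=1)  # highest degree first
        leaves[int(g)] = (a, b)
    return leaves


def predict(leaves, group, t):
    """Pick the leaf by `group`, then evaluate that leaf's line at `t`."""
    a = np.array([leaves[int(g)][0] for g in group])
    b = np.array([leaves[int(g)][1] for g in group])
    return a * t + b


rng = np.random.default_rng(0)
g_train, t_train, y_train = make_data(2000, 0.0, 1.0, rng)
g_test, t_test, y_test = make_data(500, 1.0, 2.0, rng)  # t outside the training range

leaves = fit_group_then_trend(g_train, t_train, y_train)
mse_linear = np.mean((predict(leaves, g_test, t_test) - y_test) ** 2)

# Baseline for comparison: same grouping, but constant (mean) leaves.
const = {int(g): y_train[g_train == g].mean() for g in np.unique(g_train)}
pred_const = np.array([const[int(g)] for g in g_test])
mse_const = np.mean((pred_const - y_test) ** 2)

print(f"linear leaves MSE:   {mse_linear:.4f}")
print(f"constant leaves MSE: {mse_const:.4f}")
```

On this toy data the linear leaves keep following the trend outside the training range of `t`, while constant leaves cannot. The sketch only illustrates the separation of roles between the two feature types; it does not reproduce the experiment with mixed features.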