Credit scoring problem statement

In the previous article, I mentioned the credit-scoring task and noted that results can be unstable and model quality degrades over time.

Let’s dive a little deeper. When I try to recall the algorithm details and share the math, I can’t help adding a few side notes—like using Oracle PL/SQL for dataset preparation. I hope that’s interesting too.

When we need to decide whether to give a loan to an applicant, we have their application. We can find their previous applications and calculate a bunch of factors:

- how many different mobile phone numbers they used;

- how many different street names were mentioned;

- and so on.
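
As a rough illustration, factors like these can be counted from an applicant's history in a few lines of Python. This is only a sketch under assumptions: the record layout, the sample rows, and the names `history_factors`, `n_phones`, and `n_streets` are hypothetical and are not taken from the real pipeline.

```python
from collections import defaultdict

# Hypothetical application history: (applicant_id, phone, street).
# The rows are made up purely to exercise the function.
applications = [
    ("a1", "555-0101", "Oak Street"),
    ("a1", "555-0102", "Oak Street"),
    ("a1", "555-0103", "Pine Avenue"),
    ("a2", "555-0200", "Elm Road"),
]


def history_factors(rows):
    """Count distinct phone numbers and street names per applicant."""
    phones = defaultdict(set)
    streets = defaultdict(set)
    for applicant_id, phone, street in rows:
        phones[applicant_id].add(phone)
        streets[applicant_id].add(street)
    return {
        applicant_id: {
            "n_phones": len(phones[applicant_id]),
            "n_streets": len(streets[applicant_id]),
        }
        for applicant_id in phones
    }


factors = history_factors(applications)
print(factors["a1"])
```

Sets do the deduplication here, so repeated values across applications are counted once.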

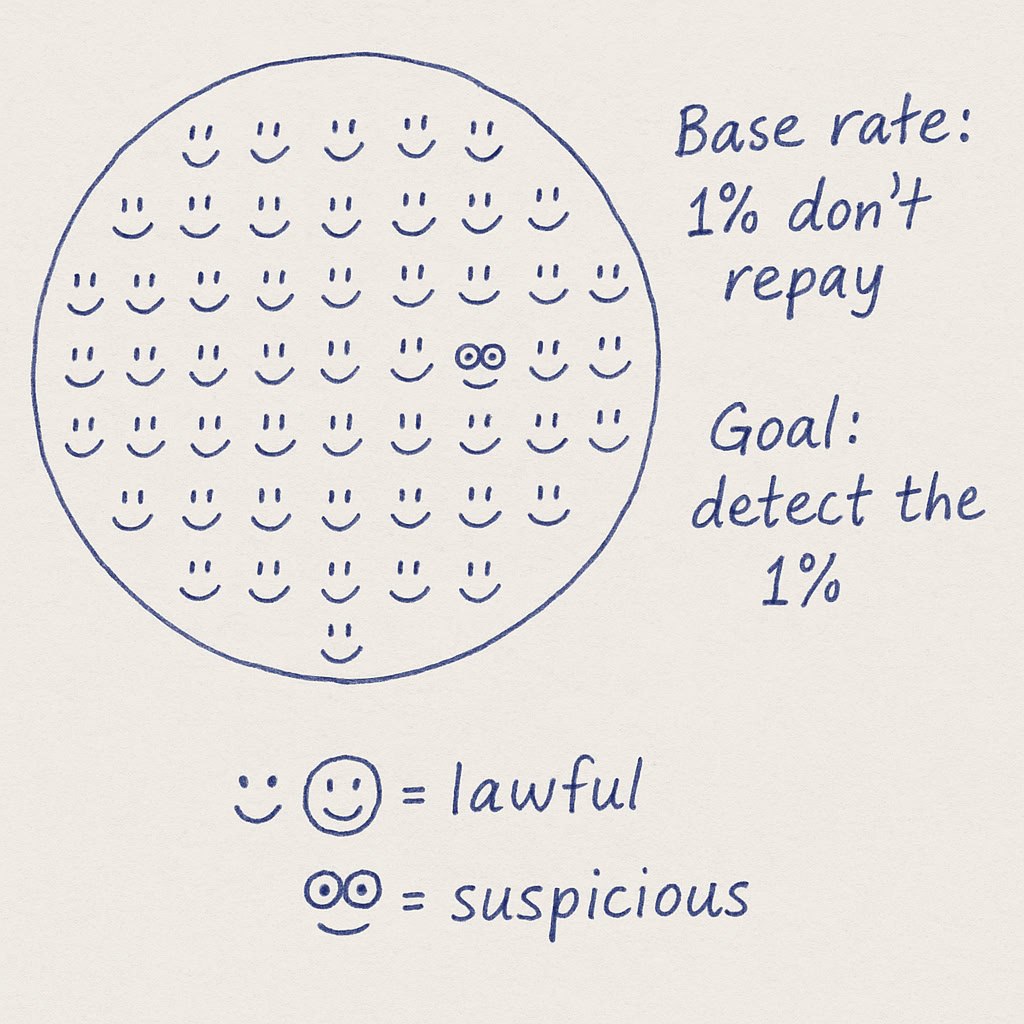

Gradient Boosted Decision Trees (GBDT), in a nutshell, divide our customers into groups by thresholding features and assign each group a constant score. A high score means the group is more likely to default; a low score suggests reliable customers who will repay.
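
To make the "threshold, then constant score" idea concrete, here is a toy sketch of how such a model produces a score. It assumes two hand-picked depth-1 trees with invented thresholds and scores; a real GBDT library fits many trees one after another, so this shows only the shape of the prediction, not the training.

```python
def stump(feature, threshold, score_low, score_high):
    """Build a depth-1 tree: one threshold, two groups, one constant score each."""
    def predict(customer):
        if customer[feature] > threshold:
            return score_high
        return score_low
    return predict


# Hypothetical trees; thresholds and scores are illustrative, not fitted.
trees = [
    stump("n_phones", 2, -0.25, 0.5),
    stump("n_streets", 1, -0.125, 0.25),
]


def ensemble_score(customer):
    """Sum the constant scores of the groups the customer falls into."""
    return sum(tree(customer) for tree in trees)


risky = ensemble_score({"n_phones": 3, "n_streets": 2})
steady = ensemble_score({"n_phones": 1, "n_streets": 1})
print(risky, steady)
```

Each tree only places the customer in a group and returns that group's constant; the final score is the sum over trees, so a higher total means higher estimated risk.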

The problem is that factors change their strength—and even their meaning—over time.

Take the feature “exactly three phone numbers in a user’s history.” We checked three consecutive years: 2014, 2015, and 2016. The overall average fraud rate was 1% in each year. We then ran a simple uplift check: we selected the group of applicants with exactly three phone numbers and computed the average target value (the fraud rate within that group) for each year. Results:

- 2014: 1.5%

- 2015: 1%

- 2016: 0.5%

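In 2014, this factor was solid evidence of higher fraud risk: the group’s rate was 1.5 times the 1% average. In 2015, the group matched the average, so the factor became neutral. In 2016, its polarity reversed: at half the average rate, it suggested a more lawful customer.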
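
The same comparison can be written as a ratio of the group’s rate to the overall rate. The sketch below uses only the percentages quoted in this article; the names `lift` and `polarity` are my own labels for illustration, not part of any library.

```python
# Overall average fraud rate, in percent, identical in all three years.
BASE_RATE = 1.0

# Fraud rate, in percent, for applicants with exactly three phone numbers.
group_rate = {2014: 1.5, 2015: 1.0, 2016: 0.5}


def polarity(ratio):
    """Describe which way the factor points relative to the average."""
    if ratio > 1:
        return "riskier than average"
    if ratio < 1:
        return "safer than average"
    return "neutral"


# Ratio of the group's rate to the overall rate, per year.
lift = {year: rate / BASE_RATE for year, rate in group_rate.items()}

for year in sorted(lift):
    print(year, lift[year], polarity(lift[year]))
```

A ratio above 1 means the group is worse than average, below 1 means better, so a factor that drifts from 1.5 to 0.5 has flipped its meaning while its definition stayed the same.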

Next time, I’ll talk about dataset preparation and why I’m not happy with Oracle PL/SQL.