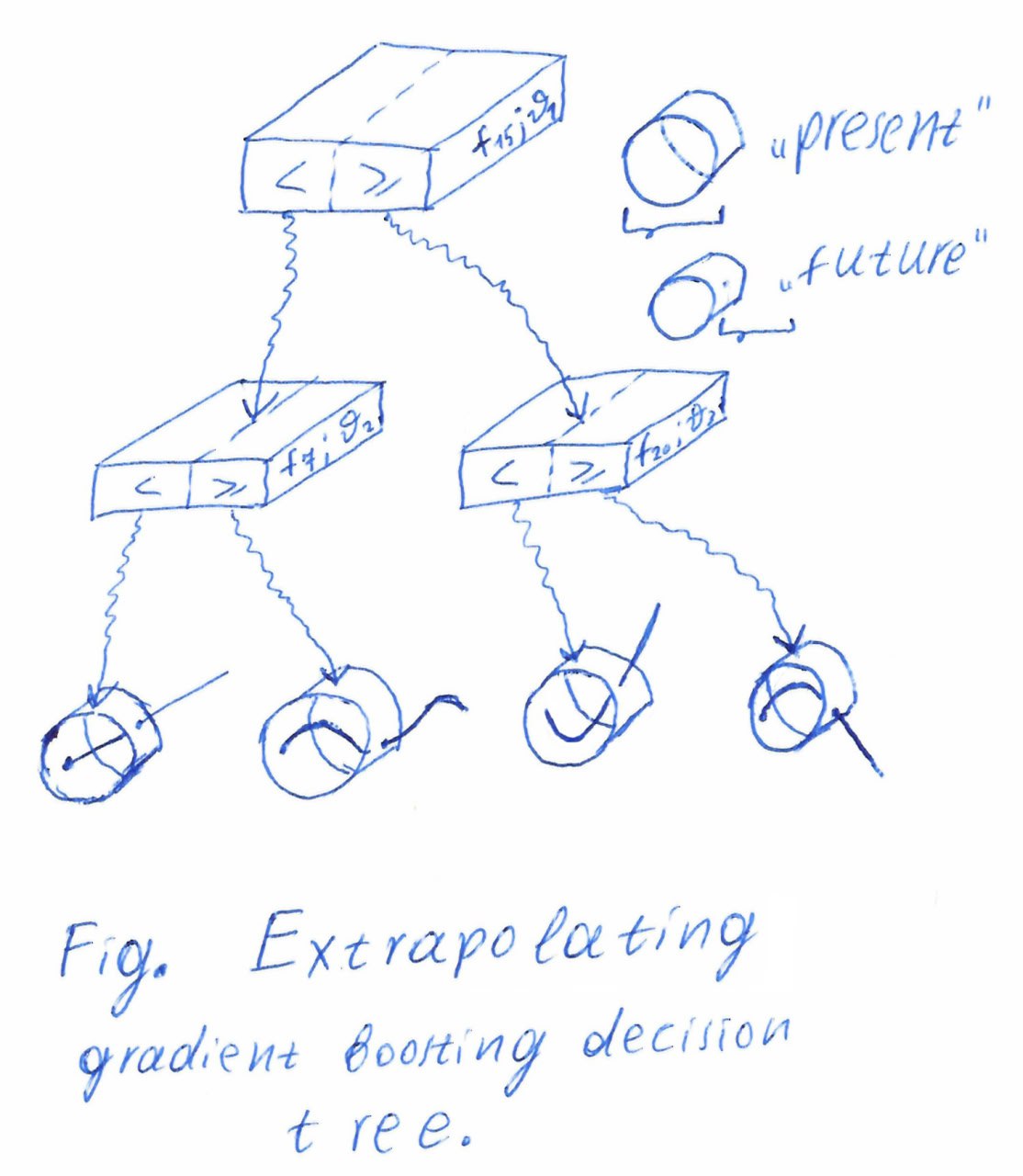

Here it is: a historical picture of a modification my friend and I made to gradient-boosted decision trees (GBDT). It all started at Equifax. In 2016, almost ten years ago, I tried to join a credit-scoring project. At the time, the well-known XGBoost package (2014) was only two years old, and everyone was trying to apply it in their own domain. In credit scoring it gave quite a boost, especially compared with linear-regression models, which are widely used in banks for their interpretability. But GBDT models degrade quickly because of the dynamic nature of the fraud market: a standard tree predicts a constant in each leaf, so it cannot follow a trend beyond the range it was trained on. The picture shows the main idea: put extrapolators in the leaves of the GBDT, turning it into an extrapolator rather than an interpolator, unlike the majority of well-known ML models.
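To make the idea concrete, here is a minimal pure-Python sketch, not our original implementation. It assumes a single feature (think of it as time), depth-1 trees, squared-error boosting, and a plain least-squares line as the "extrapolator" in each leaf. All function names and parameters here (`fit_line`, `fit_stump`, `fit_boosting`, `linear_leaves`, and so on) are made up for illustration.

```python
def fit_line(xs, ys):
    """Least-squares line y = a + b*x; falls back to a constant if x has no spread."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:
        return my, 0.0
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return my - b * mx, b


def fit_stump(xs, rs, linear_leaves):
    """Depth-1 tree on residuals rs: pick the split with the lowest squared error.

    Each leaf stores (a, b) and predicts a + b*x; constant leaves use b = 0.
    """
    best = None
    for thr in sorted(set(xs))[1:]:  # both sides of every split are non-empty
        leaves, err = [], 0.0
        for keep_left in (True, False):
            part = [(x, r) for x, r in zip(xs, rs) if (x < thr) == keep_left]
            px, pr = [p[0] for p in part], [p[1] for p in part]
            a, b = fit_line(px, pr) if linear_leaves else (sum(pr) / len(pr), 0.0)
            leaves.append((a, b))
            err += sum((r - (a + b * x)) ** 2 for x, r in part)
        if best is None or err < best[0]:
            best = (err, thr, leaves)
    return best[1], best[2]


def predict_stump(stump, x):
    thr, (left, right) = stump
    a, b = left if x < thr else right
    return a + b * x


def fit_boosting(xs, ys, n_rounds=30, lr=0.5, linear_leaves=False):
    """Plain gradient boosting for squared error: each stump fits the current residuals."""
    base = sum(ys) / len(ys)
    preds = [base] * len(xs)
    stumps = []
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals, linear_leaves)
        stumps.append(stump)
        preds = [p + lr * predict_stump(stump, x) for p, x in zip(preds, xs)]
    return base, lr, stumps


def predict(model, x):
    base, lr, stumps = model
    return base + lr * sum(predict_stump(s, x) for s in stumps)


# Toy drifting target: y = 2*t + 1, observed only for t = 0..9.
train_t = [float(t) for t in range(10)]
train_y = [2.0 * t + 1.0 for t in train_t]

const_model = fit_boosting(train_t, train_y, linear_leaves=False)
linear_model = fit_boosting(train_t, train_y, linear_leaves=True)

# t = 15 lies outside the training range; the true value would be 31.
print("constant leaves:", predict(const_model, 15.0))
print("linear leaves:  ", predict(linear_model, 15.0))
```

On this toy data the constant-leaf model returns exactly the same value for t = 15 as for t = 9, because both points always fall into the same rightmost leaf. The linear-leaf model keeps following the trend and lands close to 31. Real data is noisier, so an extrapolator in the leaves would need regularization and care that this sketch leaves out.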

October 15, 2025