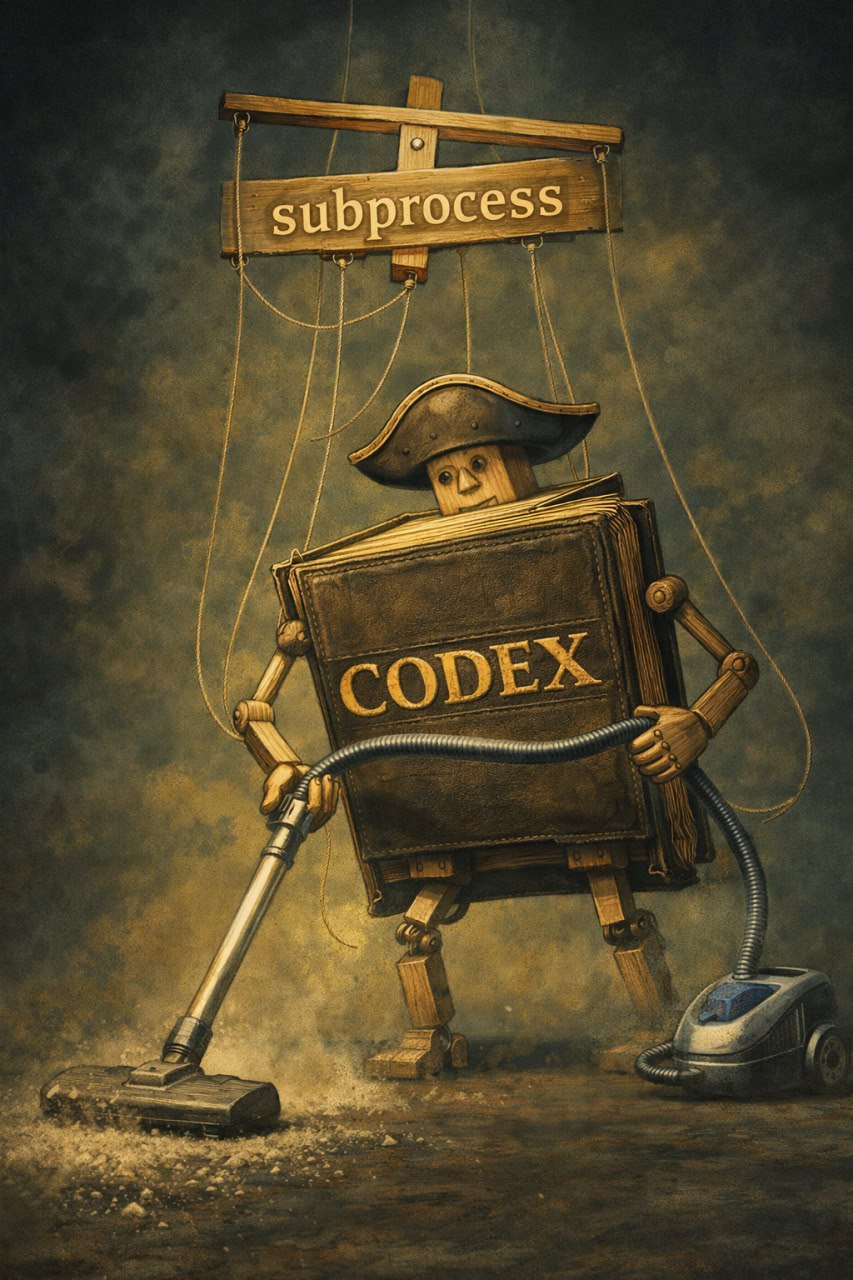

Marionette Codex

Today I want to share one technical insight.

Suppose you are building your own project and you want to add an agent to it. At first glance, it sounds simple: send a prompt, get an answer, call a tool, repeat.

But very quickly it turns out that writing your own harness is not simple at all.

A useful agent must be able to inspect files, write tiny code snippets, run commands, patch something, continue the conversation, call tools, and do all this in a secure and flexible way. Building and maintaining all of that yourself is hell on Earth.

The nice trick is: you do not need to write all this stuff yourself.

You can just call Codex with a few flags. OpenAI documents codex exec as the non-interactive mode for scripts and automation, --json for machine-readable JSONL events, and codex exec resume for continuing an earlier automation run.

Something in this spirit:

codex exec --json --skip-git-repo-check --model <model> "<prompt>"

Then on the next turn:

codex exec resume <thread_id> --json --skip-git-repo-check --model <model> "<prompt>"
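To make the two commands above concrete, here is a minimal Python sketch of how one might launch them from an application. The function names build_turn_command and run_turn, and the timeout value, are my own illustrative choices and not part of Codex; the flags are exactly the ones shown above.

```python
import subprocess


def build_turn_command(prompt, model, thread_id=None):
    """Build the argv for one Codex turn.

    Without thread_id this mirrors the first command above;
    with thread_id it mirrors the `codex exec resume` command.
    """
    cmd = ["codex", "exec"]
    if thread_id is not None:
        cmd += ["resume", thread_id]
    cmd += ["--json", "--skip-git-repo-check", "--model", model, prompt]
    return cmd


def run_turn(prompt, model, thread_id=None, timeout=600):
    """Run one turn as a subprocess and return its stdout lines (JSONL).

    Hypothetical helper: the 600-second timeout is an arbitrary choice.
    Raises CalledProcessError if Codex exits with a non-zero status.
    """
    result = subprocess.run(
        build_turn_command(prompt, model, thread_id),
        capture_output=True,
        text=True,
        timeout=timeout,
        check=True,
    )
    return result.stdout.splitlines()
```

Passing the arguments as a list (rather than one shell string) means the prompt does not need shell quoting.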

This leads to a very neat architecture:

one turn = one subprocess invocation

state = conversation stored by Codex outside your app; you keep only the session id (the <thread_id> above)

control loop = fully yours
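Here is a rough Python sketch of that architecture, under a few assumptions. The names parse_events, find_thread_id and agent_loop are hypothetical. I also assume that some event in the JSONL stream carries a top-level "thread_id" field; check the actual output of your Codex version before relying on that. The function that actually launches the subprocess is passed in as run_turn, so the loop itself stays independent of how Codex is started.

```python
import json


def parse_events(lines):
    """Parse JSONL output into a list of events, skipping blank or non-JSON lines."""
    events = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            events.append(json.loads(line))
        except json.JSONDecodeError:
            continue
    return events


def find_thread_id(events):
    """Return the first top-level "thread_id" found, or None.

    Assumption: the id appears as a top-level "thread_id" key on some event.
    """
    for event in events:
        if isinstance(event, dict) and "thread_id" in event:
            return event["thread_id"]
    return None


def agent_loop(prompts, run_turn):
    """Run one subprocess per prompt, carrying only the thread id between turns.

    run_turn(prompt, thread_id) must return the turn's stdout lines.
    """
    thread_id = None
    transcript = []
    for prompt in prompts:
        events = parse_events(run_turn(prompt, thread_id))
        # Keep the previous id if this turn did not report one.
        thread_id = find_thread_id(events) or thread_id
        transcript.append(events)
    return thread_id, transcript
```

The only thing the application remembers between turns is thread_id; everything else about the conversation lives on the Codex side. Because run_turn is injected, the loop can be exercised with a stub that returns canned JSONL lines, without Codex installed.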

And this is the part I like most.

You reuse the whole safety and tool-calling machinery already built into Codex, while still having total control over the agent loop in your own application. By default codex exec runs in a read-only sandbox, while --full-auto switches to a lower-friction mode with approvals on request and workspace-write sandboxing.
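One way to keep that choice explicit in your own code is to make it a parameter, so the read-only default stays the default. This is only a sketch: build_exec_command and its allow_writes parameter are hypothetical names, and the only flags used are --json and --full-auto as described above.

```python
def build_exec_command(prompt, allow_writes=False):
    """Build a `codex exec` argv, opting into --full-auto only on request.

    With allow_writes=False no sandbox flag is passed, so Codex keeps
    its default behavior for `codex exec`.
    """
    cmd = ["codex", "exec"]
    if allow_writes:
        cmd.append("--full-auto")
    cmd += ["--json", prompt]
    return cmd
```

The caller then has to ask for write access deliberately, for example build_exec_command("fix the failing test", allow_writes=True).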

So the agent is not some giant subsystem embedded into your codebase.

It is just your loop around Codex, with Codex keeping the conversation state on its side.

If I had to compress the whole idea into one sentence:

Use Codex as an external agent engine: launch it turn by turn, keep the session id, and build your own loop around it.

UPD: the community shared a tip: one can also use an agent SDK for this kind of thing. Will try. Stay tuned.