The ideal model. Part 1.

I procrastinated on this topic for some time. Let's at least start this conversation.

What's the problem?

I have a synthetic dataset, so I know the rules of how it was built. There is one model trained on this dataset: GBDTE. I have logloss and ROC AUC scores as results of this training. But I want to know whether it's good or bad. I need a reference. And the reference is The Ideal Model.

I asked ChatGPT to come up with an approach to this problem and the iron friend wrote down some expressions. I honestly think that the main judge in ML is the cross-validation score and score is great, so the expression is not total rubbish.

But also I want to understand what's behind these expressions, how they were derived.

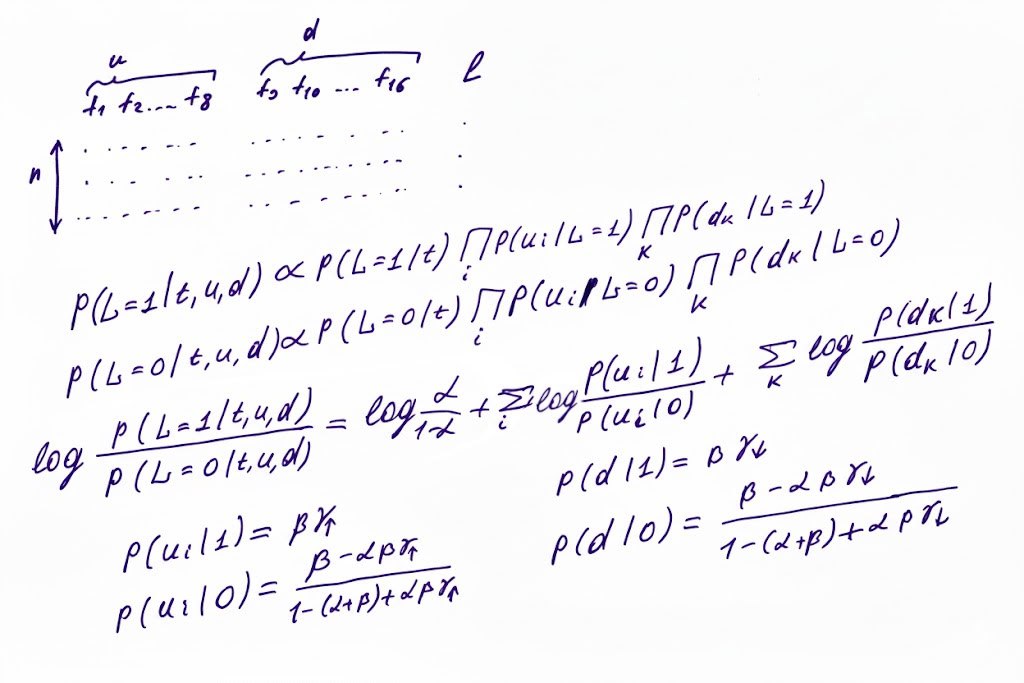

Let's check the picture. First of all, we are using u1...u8 for f1...f8 because uplift increases with time. d1...d8 for f9...f16 because uplift of these features goes down. Then we start building a discriminator between the cases L=0 and L=1. We write down the proportionality for probability of target 1 with a given set of factor values and the same for target 0. Then the score that discriminates between 0 and 1 is the logarithm of the probabilities ratio. This expression can be calculated using the conditional probabilities of the factors given the target. I assume that the whole thing is Bayes' theorem (with multiple variables).

The set of probabilities of u and d under the condition of target value is exactly what makes this dataset so special: expressions with drifting uplift. These expressions are like laws of physics for the virtual universe in which this dataset exists. Therefore we are trying to come up with the best prediction in this virtual universe.

I assume that the next step is substituting the conditional probabilities into the log expression. I'm going to check it — stay tuned.

P.S. The proper name for this approach is a Naive Bayes log-odds derivation.