EGBDT LogLoss - Learning curves

There have already been two posts about the synthetic LogLoss dataset (see the latest one). Now let's discuss an experiment with this dataset.

The dataset has two groups of static features:

📈 f1…f8: features with increasing uplift

📉 f9…f16: features with decreasing uplift

There are also two extra features, [1, t], to capture the bias and the trend.

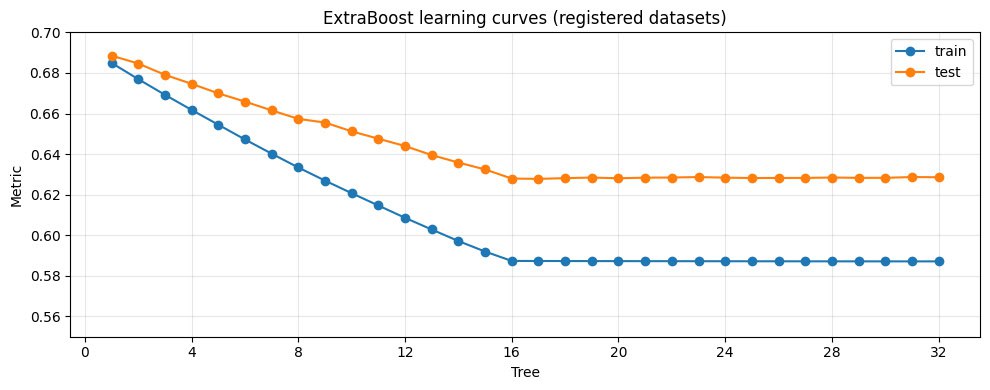

Now to the picture. This is a learning curve: loss as a function of the number of stages (i.e., how many trees are already in the model). I drew this plot mostly for debugging. I expected the loss to drop over the first 16 steps, and my initial results didn't match because of a few bugs. After fixing them, the curves look OK at first glance, but a couple of details are worth a closer look.
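A learning curve of this kind can be produced by keeping a running raw score (log-odds) per sample and evaluating the mean log loss after each stage is added. The sketch below assumes an additive model that starts from a constant score `f0`, where `stage_outputs[m][i]` (a hypothetical input format, not EGBDT's actual interface) is the already-scaled score that stage `m` adds to sample `i`. Running it once on the train set and once on the test set gives the two curves.

```python
import math


def log_loss(y, raw):
    """Mean binary log loss for labels y in {0, 1} and raw scores (log-odds)."""
    total = 0.0
    for yi, fi in zip(y, raw):
        # y=1 costs log(1 + e^-f), y=0 costs log(1 + e^f); both are softplus(z)
        z = -fi if yi == 1 else fi
        total += max(z, 0.0) + math.log1p(math.exp(-abs(z)))  # stable softplus
    return total / len(y)


def learning_curve(y, f0, stage_outputs):
    """Loss after 0, 1, ..., M stages.

    stage_outputs[m][i] is the (already shrunk) score that stage m adds to sample i.
    """
    raw = [f0] * len(y)
    curve = [log_loss(y, raw)]  # loss of the constant model, before any tree
    for increments in stage_outputs:
        raw = [r + d for r, d in zip(raw, increments)]
        curve.append(log_loss(y, raw))
    return curve
```

The stable softplus form keeps the loss finite even for very confident raw scores, which matters once a model has many stages.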

Train loss drops for steps 1…16, then stops

For points 1…16, the train loss steadily goes down. After that it mostly levels off, which is exactly what I expected.

At each stage I'm using a decision stump (a tree of depth 1). A stump splits on exactly one feature, so each step can bring in at most one new feature. Each new feature can add new information and reduce the loss. Once the useful features are exhausted, there's nothing left to squeeze out.
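To make the "one feature per stage" argument concrete, here is a minimal pure-Python sketch of boosting with stumps on log loss. It assumes XGBoost-style second-order (Newton) split gain and leaf values with an L2 penalty `lam`. EGBDT may choose splits and leaf values differently, and all function names here are hypothetical. Each fitted stump is a tuple `(feature, threshold, left_value, right_value)`, so structurally the model can only use one feature per stage.

```python
import math


def sigmoid(f):
    """Numerically stable logistic function."""
    if f >= 0:
        return 1.0 / (1.0 + math.exp(-f))
    e = math.exp(f)
    return e / (1.0 + e)


def fit_stump(X, g, h, lam):
    """Best depth-1 split by second-order gain.

    Returns (gain, feature, threshold, w_left, w_right), or None if no split exists.
    """
    G, H = sum(g), sum(h)
    parent = G * G / (H + lam)
    best = None
    for j in range(len(X[0])):
        order = sorted(range(len(X)), key=lambda i: X[i][j])
        gl = hl = 0.0
        for k in range(len(order) - 1):
            i = order[k]
            gl += g[i]
            hl += h[i]
            x_here, x_next = X[i][j], X[order[k + 1]][j]
            if x_here == x_next:
                continue  # no threshold separates equal values
            gr, hr = G - gl, H - hl
            gain = gl * gl / (hl + lam) + gr * gr / (hr + lam) - parent
            if best is None or gain > best[0]:
                best = (gain, j, (x_here + x_next) / 2, -gl / (hl + lam), -gr / (hr + lam))
    return best


def boost_stumps(X, y, n_stages, lr=0.1, lam=1.0):
    """Fit up to n_stages stumps to labels y in {0, 1}; returns (feature, thr, left, right)."""
    raw = [0.0] * len(y)  # initial log-odds score
    stumps = []
    for _ in range(n_stages):
        p = [sigmoid(f) for f in raw]
        g = [pi - yi for pi, yi in zip(p, y)]  # first derivative of log loss w.r.t. raw
        h = [pi * (1.0 - pi) for pi in p]      # second derivative
        best = fit_stump(X, g, h, lam)
        if best is None:
            break
        _, j, thr, wl, wr = best
        stump = (j, thr, lr * wl, lr * wr)  # shrink leaf values by the learning rate
        stumps.append(stump)
        raw = [f + (stump[2] if row[j] <= thr else stump[3]) for f, row in zip(raw, X)]
    return stumps


def predict_raw(stumps, X):
    """Sum of stump outputs (log-odds) for each row of X."""
    return [sum(wl if row[j] <= thr else wr for j, thr, wl, wr in stumps) for row in X]
```

Collecting the first element of each tuple in the returned list shows which feature each stage used. A stump may also reuse a feature it has already seen, so the count of distinct features grows by at most one per stage.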

The train–test gap is huge

What I don’t fully understand is the big gap between train and test. It looks like overfitting.

It might be interesting to run the same setup with different parameters and see whether the gap can be reduced (learning rate, regularization, subsampling, minimum leaf size: all the usual knobs).
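If EGBDT updates leaves the way second-order boosting libraries usually do (an assumption; the post doesn't say), several of these knobs act directly on the size of each stage's update. The sketch below shows a shrunk, L2-regularized leaf value (learning rate `lr`, penalty `lam`), a minimum leaf size counted in samples (similar in spirit to scikit-learn's `min_samples_leaf`), and per-stage row subsampling. All names are hypothetical.

```python
import random
from typing import List, Optional, Sequence


def regularized_leaf(g: Sequence[float], h: Sequence[float], lr: float = 0.1,
                     lam: float = 1.0, min_leaf: int = 1) -> Optional[float]:
    """Shrunk, L2-regularized Newton leaf value: -lr * sum(g) / (sum(h) + lam).

    Returns None when the leaf holds fewer than min_leaf samples, i.e. the split
    that would create it is not allowed.
    """
    if len(g) < min_leaf:
        return None
    return -lr * sum(g) / (sum(h) + lam)


def subsample_rows(n: int, rate: float, rng: random.Random) -> List[int]:
    """Indices of the rows used to fit one stage, drawn without replacement.

    Assumes 0 < rate <= 1.
    """
    k = max(1, int(round(rate * n)))
    return sorted(rng.sample(range(n), k))
```

A smaller `lr` and a larger `lam` both shrink each stage's step, a larger `min_leaf` forbids leaves fitted on a handful of rows, and subsampling fits every stage on a different random part of the data. That is why these are the first things to try against a large train–test gap.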

A weird flat segment on the test curve around steps 8→9

Another thing: on train, the loss decreases at each step. But on test, there’s an almost horizontal segment between the 8th and 9th points. Why?

My first guess: the first 8 trees mostly exploit one group of features, and around step 9 the model “switches” and starts using the other group for the first time. But then the question becomes: why do those features generalize worse? Are they weaker, noisier, more correlated, or do they interact with the train/test split in a strange way?
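One way to check the "switch" guess is to line up, stage by stage, the feature each stump split on with the change in test loss. Here is a minimal sketch. It assumes the features are named `f1`…`f16` as above and that `test_curve` holds the test loss before the first stage followed by the loss after every stage. `stage_report`, its inputs and the default tolerance are hypothetical choices.

```python
from typing import List, NamedTuple, Sequence


class StageReport(NamedTuple):
    stage: int        # 1-based stage number
    feature: str      # feature the stump split on
    group: str        # "increasing", "decreasing" or "other"
    new_group: bool   # True on the first stage that uses this group
    test_drop: float  # test loss before the stage minus test loss after it
    flat: bool        # |test_drop| below the tolerance


def feature_group(name: str) -> str:
    """Map f1..f8 to the increasing-uplift group and f9..f16 to the decreasing one."""
    if name.startswith("f") and name[1:].isdigit():
        k = int(name[1:])
        if 1 <= k <= 8:
            return "increasing"
        if 9 <= k <= 16:
            return "decreasing"
    return "other"  # e.g. the bias/trend features "1" and "t"


def stage_report(stage_features: Sequence[str], test_curve: Sequence[float],
                 tol: float = 1e-3) -> List[StageReport]:
    """Per-stage table; tol is an arbitrary threshold for calling a step flat."""
    if len(test_curve) != len(stage_features) + 1:
        raise ValueError("test_curve needs the loss before stage 1 plus one loss per stage")
    seen = set()
    rows = []
    for m, name in enumerate(stage_features, start=1):
        group = feature_group(name)
        drop = test_curve[m - 1] - test_curve[m]
        rows.append(StageReport(m, name, group, group not in seen, drop, abs(drop) < tol))
        seen.add(group)
    return rows
```

If the flat step coincides with `new_group=True` for the other feature group, that supports the guess. If the model was already using both groups by then, the explanation lies elsewhere.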

So many interesting questions.